Requirements

Introduction

Purpose

This Software Requirements Specification (SRS) defines the MVP requirements for AirGap Transfer, a command-line utility for safely transferring large files across air-gap boundaries.

Scope

Product: AirGap Transfer — a minimal CLI tool for chunked file transfers via removable media.

In Scope:

Split large datasets into chunks for USB transfer

Reconstruct files from chunks with integrity verification

Resume interrupted transfers

Cross-platform support (macOS, Windows, Linux)

Out of Scope:

Network transfers, cloud sync, auto-updates

Compression or encryption (deferred to post-MVP; cryptographic agility for hashing is in scope)

Graphical user interface (GUI)

Real-time synchronization

Ollama-specific logic (the tool is general-purpose)

Definitions

Term |

Definition |

|---|---|

Air-gap |

Physical separation between systems with no network connectivity |

Chunk |

A fixed-size tar archive containing a portion of source data |

Pack |

Operation to split source files into chunks |

Unpack |

Operation to reconstruct files from chunks |

Manifest |

Metadata file describing chunk inventory and checksums |

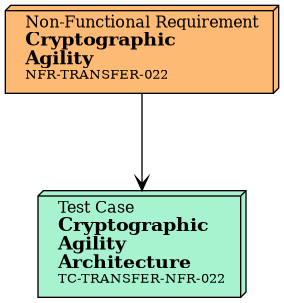

Cryptographic agility |

Ability to swap hash algorithms without rearchitecting the system |

Overall Description

Product Perspective

Standalone CLI tool for transferring data across air-gap boundaries using removable media. All operations occur locally with no network connectivity. See the Software Design Document for architecture diagrams and component details.

Constraints

Constraint |

Description |

|---|---|

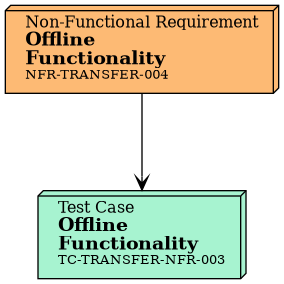

Offline-only |

Zero network calls at runtime |

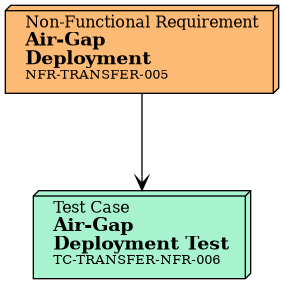

Air-gap ready |

Deployable without internet access |

Platforms |

macOS, Windows, Linux |

UI model |

Command-line interface only (no GUI) |

Media |

Works with standard removable media (USB, external drives) |

Assumptions and Dependencies

User assumptions:

Operators have basic command-line literacy and can navigate filesystems.

Operators understand the air-gap transfer workflow: pack on the source machine, physically move USB media, unpack on the destination machine.

Operators have write access to both the source directory and the USB media.

System assumptions:

At least one USB drive or removable storage device is available and mounted at a standard OS mount point (/Volumes/* on macOS, /media/$USER/* or /mnt/* on Linux, drive letters on Windows).

The filesystem on the destination media supports files up to the configured chunk size (e.g., FAT32 limits a single file to 4 GiB minus one byte).

Power remains stable during write operations. Interrupted writes are handled via the resume mechanism, but data written during a power loss may be corrupt.
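The FAT32 assumption above can be enforced before any data is written. The following is a minimal Rust sketch, assuming the destination filesystem type is already known (detecting it needs platform-specific APIs that are not shown). `Filesystem`, `FAT32_MAX_FILE_BYTES`, and `check_chunk_size` are hypothetical names, not identifiers from the AirGap Transfer codebase. FAT32's per-file maximum is 4 GiB minus one byte (4,294,967,295 bytes).

```rust
/// Destination filesystems this sketch distinguishes (hypothetical; a real
/// tool would need platform APIs to detect the filesystem type).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Filesystem {
    Fat32,
    Other,
}

/// Largest single file FAT32 can store: 4 GiB minus one byte.
pub const FAT32_MAX_FILE_BYTES: u64 = 4 * 1024 * 1024 * 1024 - 1;

/// Returns an error message if a chunk of `chunk_size` bytes cannot be
/// stored as a single file on `fs`.
pub fn check_chunk_size(fs: Filesystem, chunk_size: u64) -> Result<(), String> {
    if chunk_size == 0 {
        return Err("chunk size must be greater than zero".to_string());
    }
    match fs {
        Filesystem::Fat32 if chunk_size > FAT32_MAX_FILE_BYTES => Err(format!(
            "chunk size {} bytes exceeds the FAT32 per-file limit of {} bytes; \
             choose a smaller chunk size",
            chunk_size, FAT32_MAX_FILE_BYTES
        )),
        _ => Ok(()),
    }
}
```

Running this check at the start of a pack lets the tool fail with a suggested fix instead of discovering the limit partway through writing a chunk.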

Build dependencies:

Rust toolchain (stable channel) for compilation.

cargo vendor for offline/air-gap builds when crates.io is not reachable.

Python 3.10+ and Sphinx for documentation builds.

Runtime dependencies:

No network access required or expected. The binary is fully self-contained.

No third-party libraries are required at runtime: Rust crate dependencies are statically linked into the binary.

Functional Requirements

Pack Operation

ID |

Priority |

Title |

|---|---|---|

must |

Split Files into Chunks |

|

must |

Auto-Detect USB Capacity |

|

must |

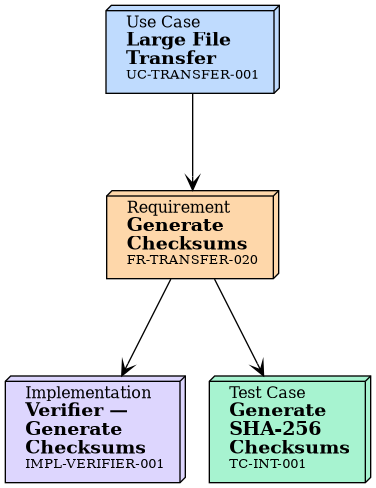

Generate Chunk Checksums |

|

must |

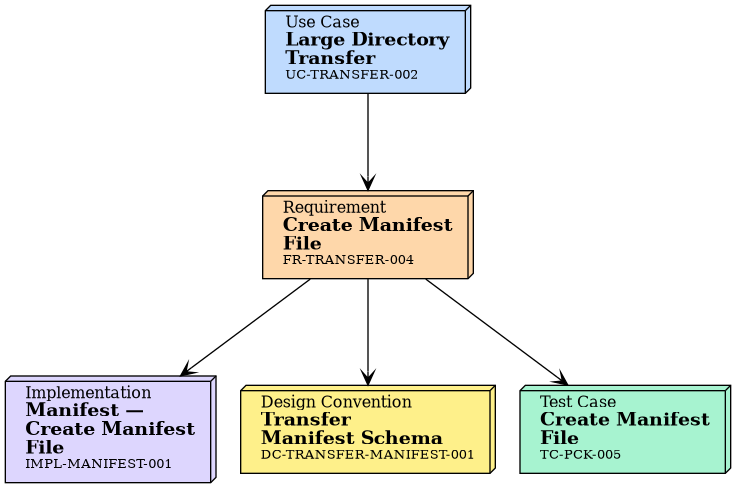

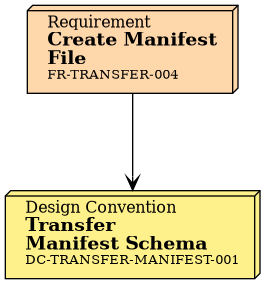

Create Manifest File |

|

must |

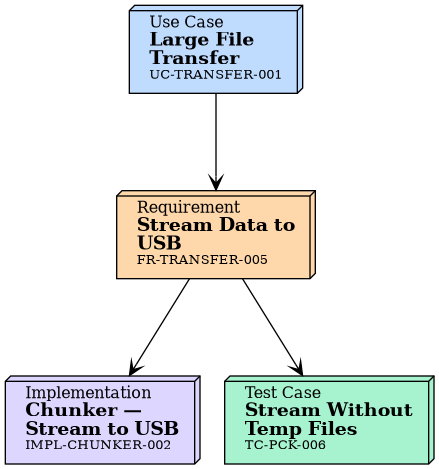

Stream Data to USB |

|

should |

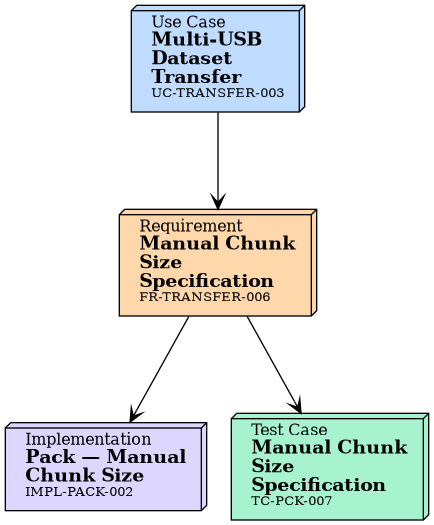

Manual Chunk Size Specification |

|

should |

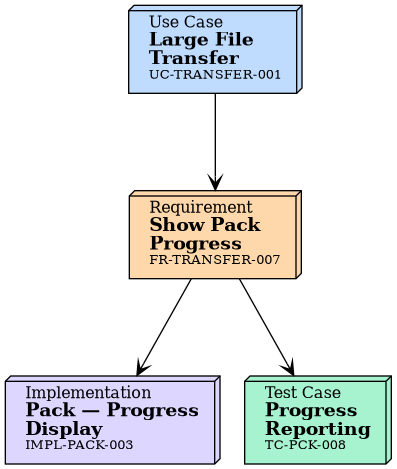

Show Pack Progress |

|

should |

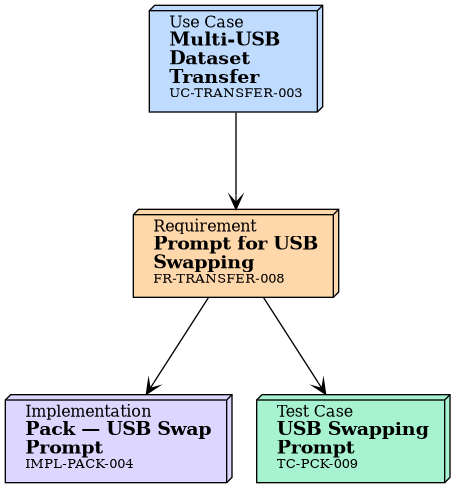

Prompt for USB Swapping |

|

should |

Resume Interrupted Pack |

|

must |

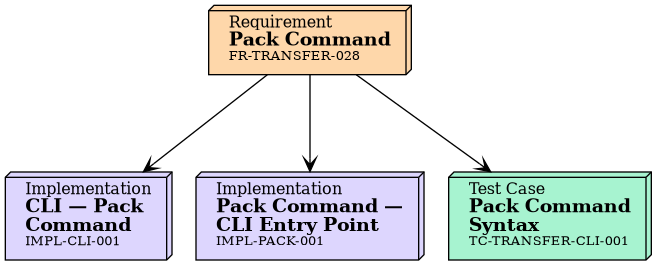

Pack Command |

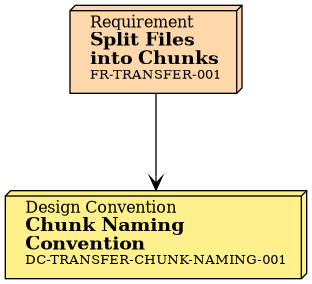

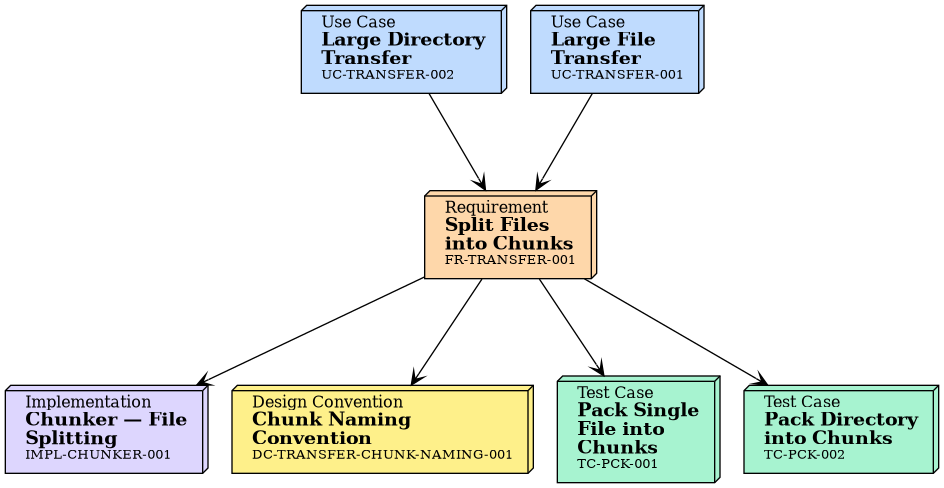

Split source files/directories into fixed-size chunks. See DC-TRANSFER-CHUNK-NAMING-001 for chunk naming conventions. |

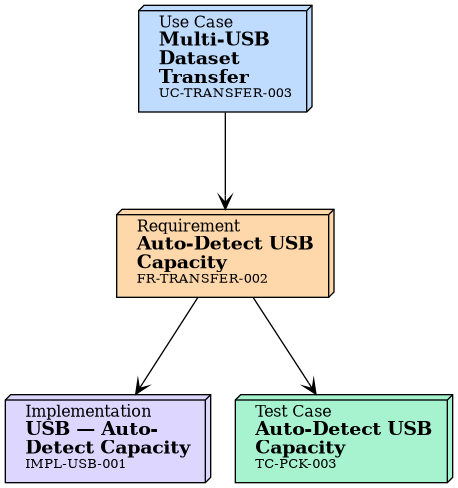

Auto-detect USB capacity and set chunk size accordingly |

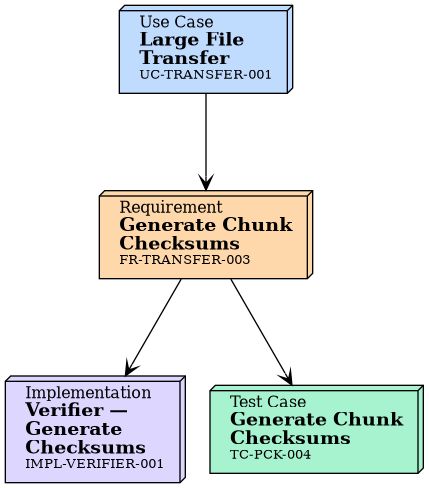

Generate checksums for each chunk using the configured hash algorithm (default: SHA-256) |

Create manifest file with chunk metadata and checksums. See DC-TRANSFER-MANIFEST-001 for the manifest schema. |

Stream data directly to USB without intermediate temp files |

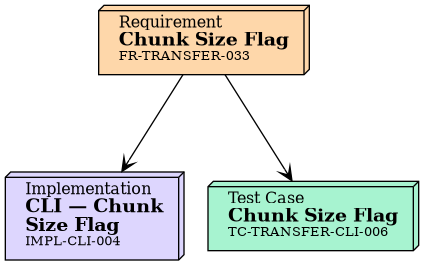

Support manual chunk size specification |

Show progress during chunk creation |

Prompt for USB swapping when multiple chunks needed |
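The split and streaming requirements above (fixed-size chunks, no intermediate temp files, bounded memory) can be illustrated with a streaming splitter. This is a sketch under simplifying assumptions: it splits a single input stream into raw byte ranges and omits the tar framing that the Chunk definition calls for, as well as directory traversal and checksumming. `split_stream`, its two callbacks, and the 64 KiB buffer size are hypothetical.

```rust
use std::io::{self, Read, Write};

/// Copy buffer size (hypothetical value). Peak memory is bounded by this
/// buffer, independent of the size of the source.
const BUF_SIZE: usize = 64 * 1024;

/// Splits `src` into consecutive chunks of at most `chunk_size` bytes.
/// `open_chunk(i)` creates the writer for chunk `i`; `close_chunk(i, w)`
/// receives it back once the chunk is full or the input is exhausted.
/// Returns the number of chunks produced (0 for empty input).
pub fn split_stream<R, W, O, C>(
    mut src: R,
    chunk_size: u64,
    mut open_chunk: O,
    mut close_chunk: C,
) -> io::Result<usize>
where
    R: Read,
    W: Write,
    O: FnMut(usize) -> io::Result<W>,
    C: FnMut(usize, W) -> io::Result<()>,
{
    assert!(chunk_size > 0, "chunk_size must be positive");
    let mut buf = vec![0u8; BUF_SIZE];
    let mut index = 0usize;
    // Writer for the chunk being filled, plus the bytes written to it so far.
    let mut current: Option<(W, u64)> = None;
    loop {
        // Never read past the space left in the current chunk.
        let room = current.as_ref().map_or(chunk_size, |(_, n)| chunk_size - n);
        let want = room.min(BUF_SIZE as u64) as usize;
        let read = src.read(&mut buf[..want])?;
        if read == 0 {
            break; // end of input
        }
        // Open the next chunk lazily, so no empty trailing chunk is created.
        if current.is_none() {
            current = Some((open_chunk(index)?, 0));
        }
        let (writer, written) = current.as_mut().expect("chunk writer is open");
        writer.write_all(&buf[..read])?;
        *written += read as u64;
        if *written == chunk_size {
            // Chunk is full: hand it back to the caller.
            let (mut full, _) = current.take().expect("chunk writer is open");
            full.flush()?;
            close_chunk(index, full)?;
            index += 1;
        }
    }
    // Close the final, possibly shorter, chunk.
    if let Some((mut last, _)) = current.take() {
        last.flush()?;
        close_chunk(index, last)?;
        index += 1;
    }
    Ok(index)
}
```

Because each read is capped at the space left in the current chunk, no chunk exceeds `chunk_size`, and peak memory is one buffer regardless of source size. In a real pack, `open_chunk` could create the chunk file on the removable media and `close_chunk` could sync it and record its checksum.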

Unpack Operation

ID |

Priority |

Title |

|---|---|---|

must |

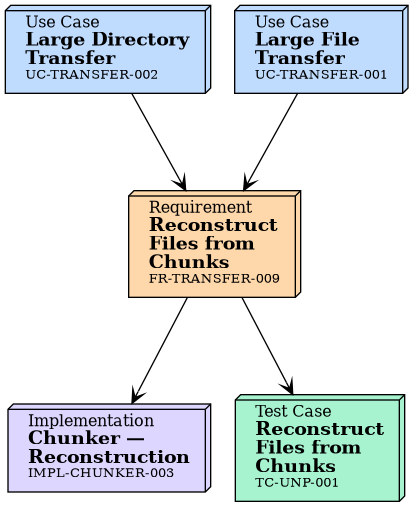

Reconstruct Files from Chunks |

|

must |

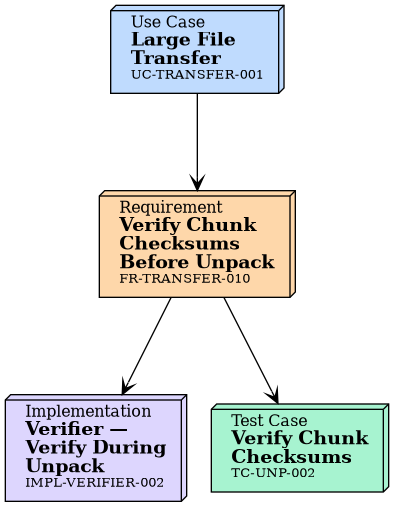

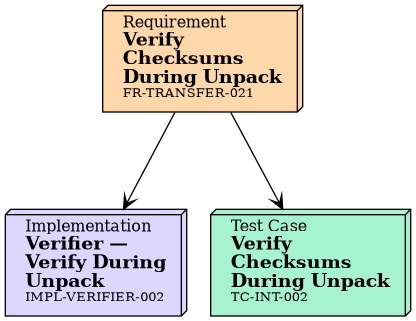

Verify Chunk Checksums Before Unpack |

|

must |

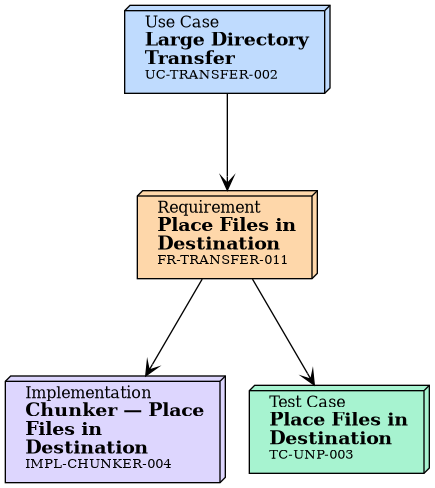

Place Files in Destination |

|

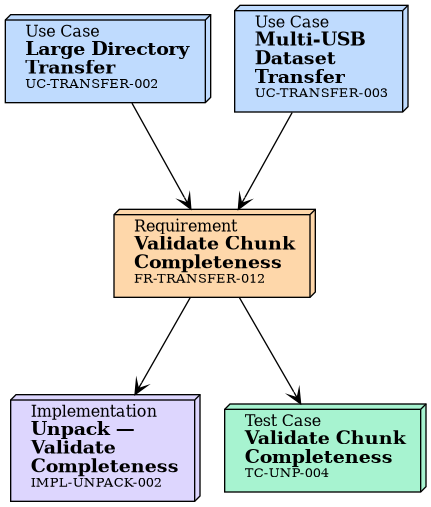

must |

Validate Chunk Completeness |

|

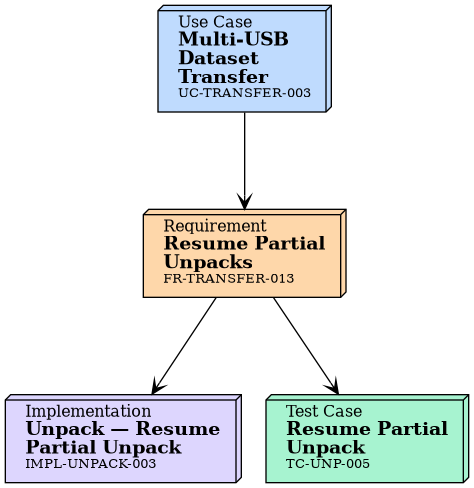

should |

Resume Partial Unpacks |

|

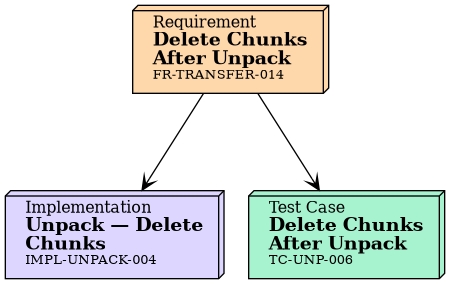

should |

Delete Chunks After Unpack |

|

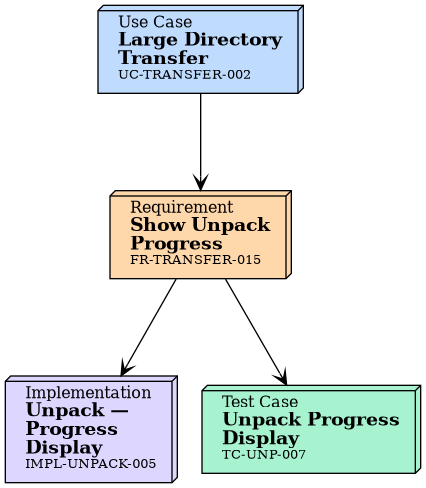

should |

Show Unpack Progress |

|

should |

Resume Interrupted Unpack |

|

must |

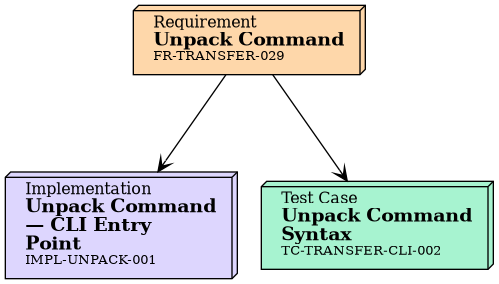

Unpack Command |

Reconstruct original files from chunks |

Verify chunk checksums before reconstruction |

Place reconstructed files in specified destination |

Validate chunk completeness (all chunks present) |

Resume partial unpacks if interrupted |

Optionally delete chunks after successful reconstruction |

Show progress during reconstruction |

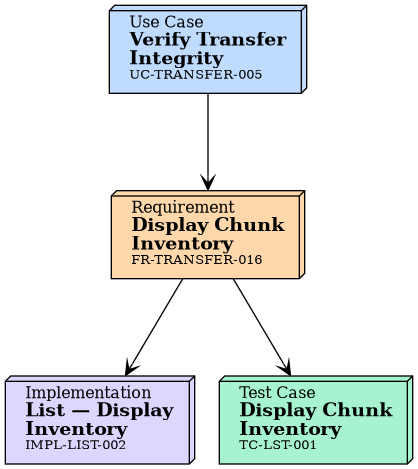
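The reconstruction and completeness requirements can be sketched as follows, assuming chunks are raw byte ranges (tar extraction is omitted) and that their checksums have already been verified. The hypothetical `reassemble` function refuses to write anything until every index from 0 to chunk_count - 1 is present exactly once.

```rust
use std::io::{self, Read, Write};

/// Concatenates chunks into `out` in index order. `chunks` holds
/// (index, reader) pairs in any order. Returns the number of bytes written.
pub fn reassemble<R: Read, W: Write>(
    mut chunks: Vec<(usize, R)>,
    chunk_count: usize,
    out: &mut W,
) -> io::Result<u64> {
    chunks.sort_by_key(|(index, _)| *index);
    // Completeness check before any byte is written: after sorting,
    // position i must hold index i, with no gaps or duplicates.
    let complete = chunks.len() == chunk_count
        && chunks.iter().enumerate().all(|(pos, (index, _))| pos == *index);
    if !complete {
        return Err(io::Error::new(
            io::ErrorKind::InvalidData,
            "chunk set is incomplete or contains duplicate indices",
        ));
    }
    let mut total = 0u64;
    for (_, mut reader) in chunks {
        // io::copy streams through a fixed internal buffer.
        total += io::copy(&mut reader, out)?;
    }
    out.flush()?;
    Ok(total)
}
```

Checking completeness up front means a missing chunk is reported before the destination is touched, rather than leaving a truncated file behind.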

List Operation

ID |

Priority |

Title |

|---|---|---|

must |

Display Chunk Inventory |

|

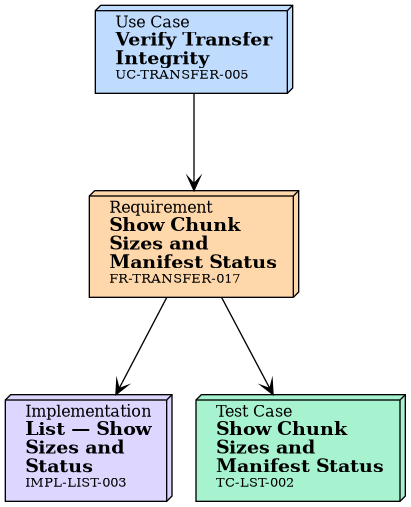

must |

Show Chunk Sizes and Manifest Status |

|

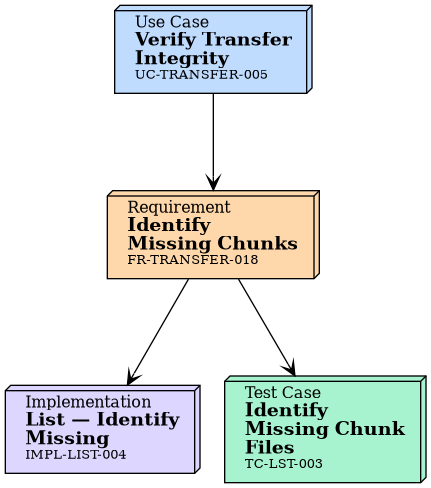

should |

Identify Missing Chunks |

|

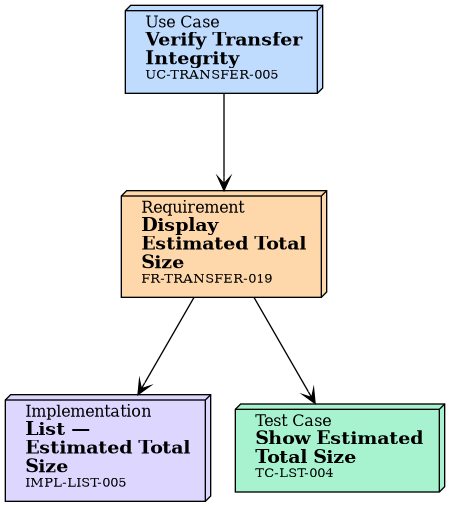

should |

Display Estimated Total Size |

|

must |

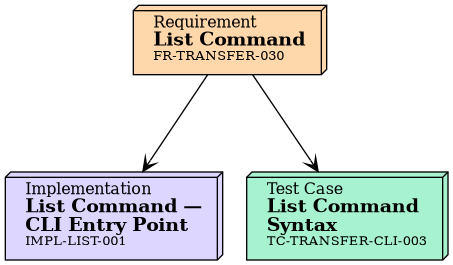

List Command |

|

should |

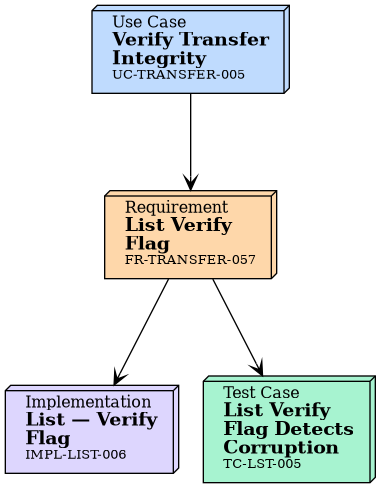

List Verify Flag |

Display chunk inventory from manifest |

Display each chunk’s size and manifest status (pending, in_progress, completed, or failed) as recorded in the manifest. This does not perform live checksum verification. |

Check file presence for each chunk listed in the manifest and flag missing files. Corruption detection requires |

Display estimated total size after reconstruction |

|
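The presence check described for Identify Missing Chunks amounts to testing each manifest filename against the chunk directory. A minimal sketch, assuming the filenames have already been read from the manifest's `chunks` array; `missing_chunks` is a hypothetical name. As the requirement notes, this detects absent files only, not corrupted ones.

```rust
use std::path::Path;

/// Returns the manifest indices whose chunk files are absent from `dir`.
/// `filenames` is the ordered list of chunk filenames from the manifest.
/// Only presence is checked; corruption needs checksum verification.
pub fn missing_chunks(dir: &Path, filenames: &[&str]) -> Vec<usize> {
    filenames
        .iter()
        .enumerate()
        .filter(|(_, name)| !dir.join(name).is_file())
        .map(|(index, _)| index)
        .collect()
}
```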

Integrity Verification

ID |

Priority |

Title |

|---|---|---|

must |

Verify Chunk Checksums Before Unpack |

|

must |

Show Chunk Sizes and Manifest Status |

|

must |

Generate Checksums |

|

must |

Verify Checksums During Unpack |

|

must |

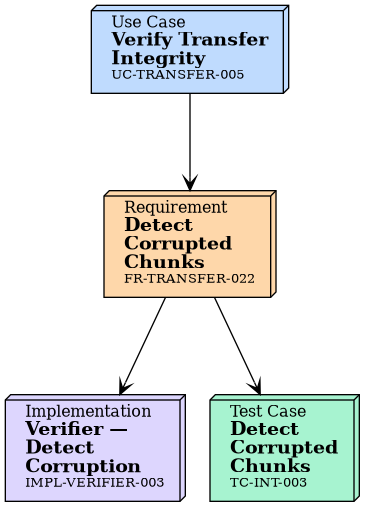

Detect Corrupted Chunks |

|

should |

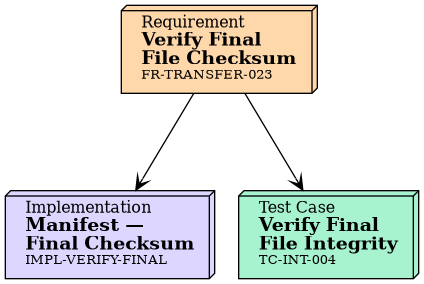

Verify Final File Checksum |

|

must |

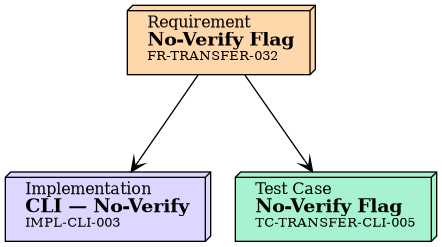

No-Verify Flag |

|

should |

List Verify Flag |

Generate checksums during pack using the configured hash algorithm (default: SHA-256) |

Verify checksums during unpack |

Detect corrupted chunks and report errors |

Verify final reconstructed file against original checksum |
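The manifest example in the appendix stores checksums as `algorithm:hex` strings (for example `sha256:abc123...`). The sketch below shows how a verifier might parse such an entry and compare it with a freshly computed digest. It takes the digest bytes as input rather than computing SHA-256 itself, since that would need a hashing crate; `parse_checksum`, `to_hex`, and `verify_checksum` are hypothetical names.

```rust
/// Splits a manifest checksum such as "sha256:9f86..." into
/// (algorithm, lowercase hex digest). Returns None if the algorithm
/// prefix is missing or the digest is not valid hex.
pub fn parse_checksum(s: &str) -> Option<(&str, String)> {
    let (algo, hex) = s.split_once(':')?;
    if algo.is_empty() || hex.is_empty() || hex.len() % 2 != 0 {
        return None;
    }
    if !hex.chars().all(|c| c.is_ascii_hexdigit()) {
        return None;
    }
    Some((algo, hex.to_ascii_lowercase()))
}

/// Encodes digest bytes as lowercase hex.
pub fn to_hex(bytes: &[u8]) -> String {
    bytes.iter().map(|b| format!("{b:02x}")).collect()
}

/// Checks a computed digest against a manifest entry. The algorithm named
/// in the manifest must match the one used to compute `digest`.
pub fn verify_checksum(expected: &str, algo_used: &str, digest: &[u8]) -> Result<(), String> {
    let (algo, hex) =
        parse_checksum(expected).ok_or_else(|| format!("malformed checksum entry: {expected}"))?;
    if algo != algo_used {
        return Err(format!("manifest expects {algo}, but digest was computed with {algo_used}"));
    }
    let actual = to_hex(digest);
    if hex != actual {
        return Err(format!("checksum mismatch: expected {hex}, got {actual}"));
    }
    Ok(())
}
```

The same comparison serves both per-chunk verification and the final reconstructed-file check; only the input bytes differ.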

Cryptographic Agility

ID |

Priority |

Title |

|---|---|---|

must |

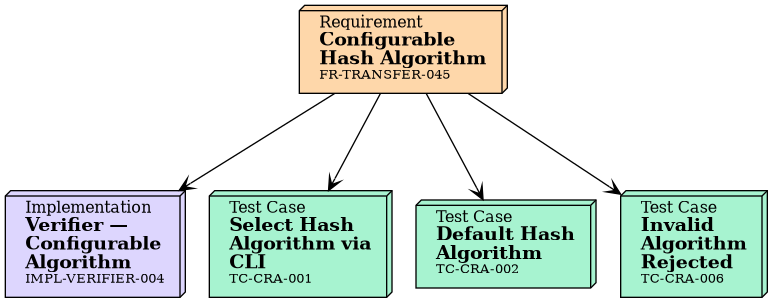

Configurable Hash Algorithm |

|

must |

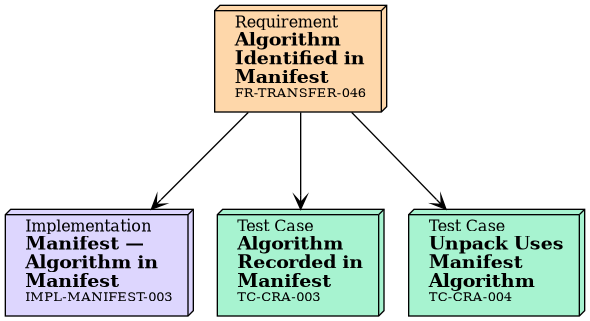

Algorithm Identified in Manifest |

|

must |

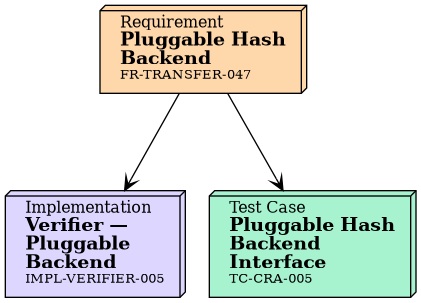

Pluggable Hash Backend |

|

should |

AEAD Algorithm Default and Agility |

The system SHALL allow users to select a hash algorithm via CLI flag ( |

The manifest SHALL record which hash algorithm was used, so unpack can verify with the correct algorithm. |

The hash module SHALL use a trait-based interface so new algorithms can be added without modifying existing code. |
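A trait-based interface of the kind Pluggable Hash Backend requires might look like the sketch below. The trait name, its methods, and the registry function are assumptions, not the project's actual API. FNV-1a is used purely as a standard-library-only stand-in so the example runs: it is not a cryptographic hash and must not replace SHA-256.

```rust
/// Interface every hash backend implements (hypothetical trait).
pub trait HashAlgorithm {
    /// Identifier recorded in the manifest's "hash_algorithm" field.
    fn name(&self) -> &'static str;
    /// Feeds more data; called repeatedly while streaming a chunk.
    fn update(&mut self, data: &[u8]);
    /// Consumes the hasher and returns the digest bytes.
    fn finalize(self: Box<Self>) -> Vec<u8>;
}

/// FNV-1a (64-bit): a NON-cryptographic stand-in so this sketch needs only
/// the standard library. A real backend would wrap SHA-256 or similar.
pub struct Fnv1a64 {
    state: u64,
}

impl Fnv1a64 {
    pub fn new() -> Self {
        // FNV-1a 64-bit offset basis.
        Fnv1a64 { state: 0xcbf2_9ce4_8422_2325 }
    }
}

impl Default for Fnv1a64 {
    fn default() -> Self {
        Self::new()
    }
}

impl HashAlgorithm for Fnv1a64 {
    fn name(&self) -> &'static str {
        "fnv1a64"
    }
    fn update(&mut self, data: &[u8]) {
        for &byte in data {
            self.state ^= u64::from(byte);
            // FNV 64-bit prime.
            self.state = self.state.wrapping_mul(0x0000_0100_0000_01b3);
        }
    }
    fn finalize(self: Box<Self>) -> Vec<u8> {
        self.state.to_be_bytes().to_vec()
    }
}

/// Looks up a backend by the name stored in the manifest.
pub fn hasher_for(name: &str) -> Option<Box<dyn HashAlgorithm>> {
    let hasher: Box<dyn HashAlgorithm> = match name {
        "fnv1a64" => Box::new(Fnv1a64::new()),
        _ => return None,
    };
    Some(hasher)
}
```

Code that streams and verifies chunks holds only a `Box<dyn HashAlgorithm>` obtained from the manifest's `hash_algorithm` value, so supporting another algorithm means adding an implementation and a registry entry while the streaming and verification paths stay unchanged.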

State Management

ID |

Priority |

Content |

|---|---|---|

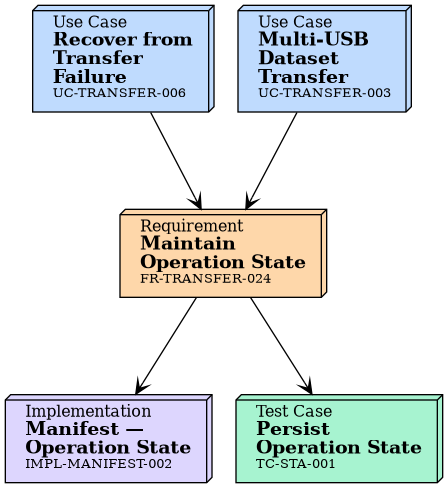

must |

Maintain operation state in manifest file |

|

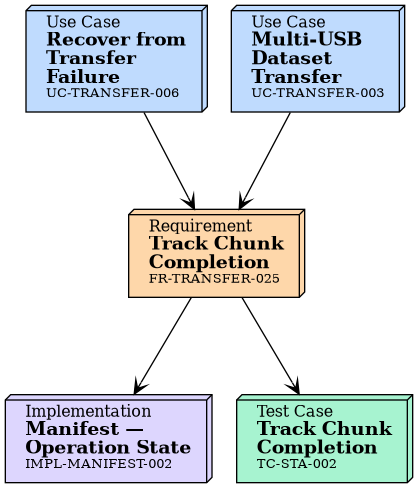

must |

Track chunk completion status |

|

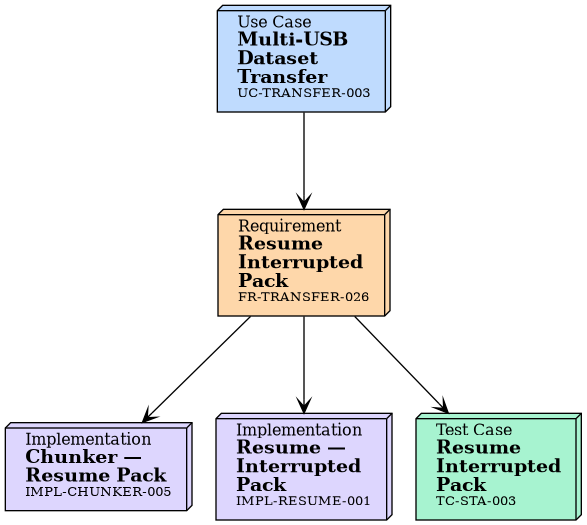

should |

Support resume for interrupted pack operations |

|

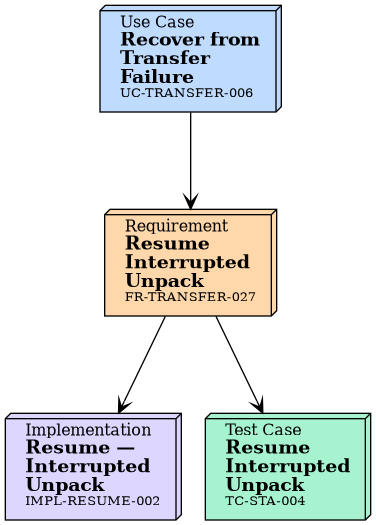

should |

Support resume for interrupted unpack operations |

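Resume and idempotency both follow from the per-chunk status recorded in the manifest. A minimal sketch using the four status values named in the Show Chunk Sizes and Manifest Status requirement; `ChunkStatus` and `chunks_to_resume` are hypothetical names, and redoing chunks left `in_progress` is a design assumption.

```rust
/// Per-chunk status values stored in the manifest.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ChunkStatus {
    Pending,
    InProgress,
    Completed,
    Failed,
}

impl ChunkStatus {
    /// Parses the string form stored in the manifest's "status" field.
    pub fn from_manifest(s: &str) -> Option<Self> {
        match s {
            "pending" => Some(Self::Pending),
            "in_progress" => Some(Self::InProgress),
            "completed" => Some(Self::Completed),
            "failed" => Some(Self::Failed),
            _ => None,
        }
    }
}

/// Indices a resumed operation must (re)process. A chunk left `in_progress`
/// is treated like `failed`: the interruption may have left it partially
/// written, so it is redone from scratch.
pub fn chunks_to_resume(statuses: &[ChunkStatus]) -> Vec<usize> {
    statuses
        .iter()
        .enumerate()
        .filter(|(_, s)| **s != ChunkStatus::Completed)
        .map(|(i, _)| i)
        .collect()
}
```

Re-running an operation whose chunks are all `completed` yields an empty list, which is one way to satisfy the idempotency requirement.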

Command Interface

ID |

Priority |

Title |

|---|---|---|

must |

Pack Command |

|

must |

Unpack Command |

|

must |

List Command |

|

must |

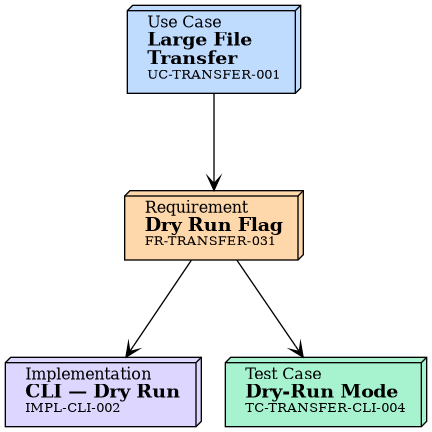

Dry Run Flag |

|

must |

No-Verify Flag |

|

should |

Chunk Size Flag |

|

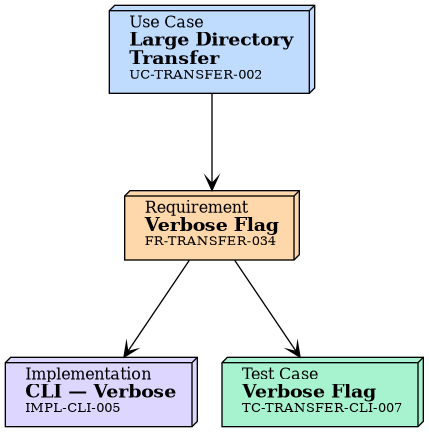

should |

Verbose Flag |

|

must |

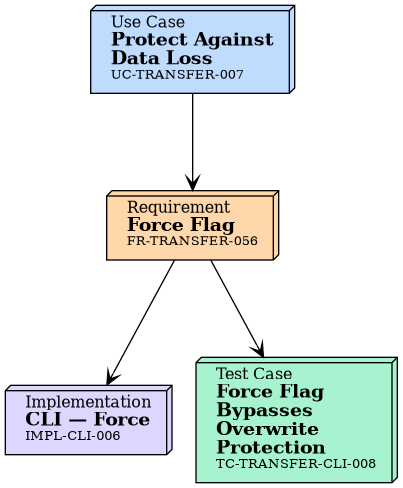

Force Flag |

|

|

|

|

Checksum verification SHALL be enabled by default for all operations. The |

|

|

|
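The default-on verification rule can be captured by making `verify` true in the options' `Default` implementation, so only an explicit opt-out turns it off. Apart from `--force`, which this document names, the flag spellings and field names below are assumptions for illustration, and parsing of the chunk-size value is omitted.

```rust
/// Hypothetical option set shared by pack and unpack.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct TransferOptions {
    /// Checksum verification; on unless the user explicitly opts out.
    pub verify: bool,
    /// Report planned actions without writing anything.
    pub dry_run: bool,
    /// Bypass overwrite protection.
    pub force: bool,
    /// Extra diagnostic output.
    pub verbose: bool,
    /// Explicit chunk size in bytes; None means auto-detect.
    pub chunk_size: Option<u64>,
}

impl Default for TransferOptions {
    fn default() -> Self {
        Self {
            verify: true, // safe default: must be disabled explicitly
            dry_run: false,
            force: false,
            verbose: false,
            chunk_size: None,
        }
    }
}

/// Applies boolean flags on top of the defaults. Flag spellings other
/// than `--force` are assumptions.
pub fn apply_flags<'a, I: IntoIterator<Item = &'a str>>(args: I) -> Result<TransferOptions, String> {
    let mut opts = TransferOptions::default();
    for arg in args {
        match arg {
            "--no-verify" => opts.verify = false,
            "--dry-run" => opts.dry_run = true,
            "--force" => opts.force = true,
            "--verbose" => opts.verbose = true,
            other => return Err(format!("unrecognized flag: {other}")),
        }
    }
    Ok(opts)
}
```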

Error Handling

ID |

Priority |

Content |

|---|---|---|

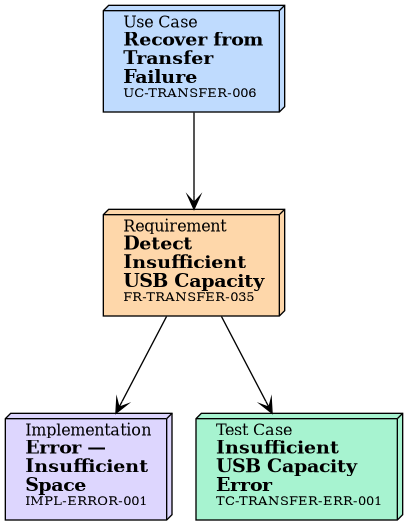

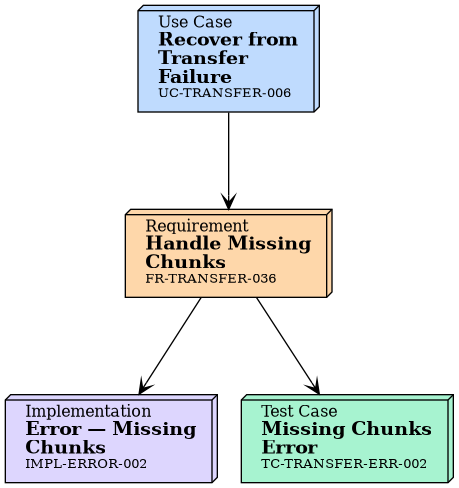

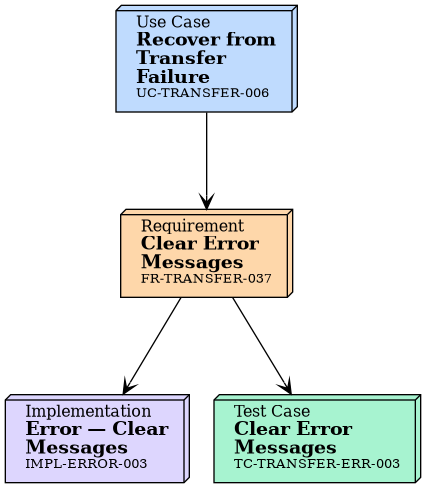

must |

Detect corrupted chunks and report errors |

|

must |

Detect and report insufficient USB capacity |

|

must |

Handle missing chunks gracefully |

|

must |

Provide clear error messages with suggested actions |

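One way to meet the clear-message requirement is an error type whose `Display` output includes both the failure details and a suggested action. The variants and wording below are hypothetical examples, not the tool's actual messages.

```rust
use std::fmt;

/// Hypothetical error type: each variant carries the details needed for a
/// specific message, and `Display` appends a suggested action.
#[derive(Debug)]
pub enum TransferError {
    InsufficientCapacity { needed: u64, available: u64 },
    MissingChunks { indices: Vec<usize> },
    ChecksumMismatch { chunk: usize },
}

impl fmt::Display for TransferError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match self {
            Self::InsufficientCapacity { needed, available } => write!(
                f,
                "insufficient space on removable media: need {needed} bytes, \
                 {available} available. Suggested action: free space, use a \
                 larger drive, or choose a smaller chunk size"
            ),
            Self::MissingChunks { indices } => write!(
                f,
                "missing chunks: {indices:?}. Suggested action: insert the \
                 media holding these chunks and re-run the command"
            ),
            Self::ChecksumMismatch { chunk } => write!(
                f,
                "chunk {chunk} failed checksum verification. Suggested action: \
                 re-copy this chunk from the source media"
            ),
        }
    }
}

impl std::error::Error for TransferError {}
```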

Safety Features

ID |

Priority |

Content |

|---|---|---|

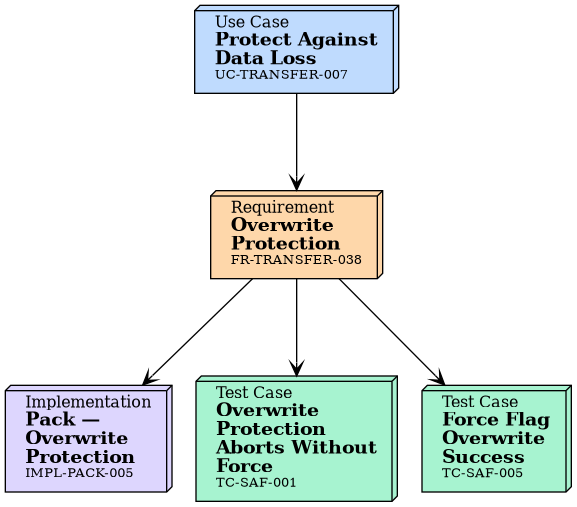

must |

The system SHALL abort with an error when the destination contains existing data: for pack, an existing manifest file; for unpack, a non-empty destination directory. The error message SHALL suggest ``--force`` to bypass this check. |

|

must |

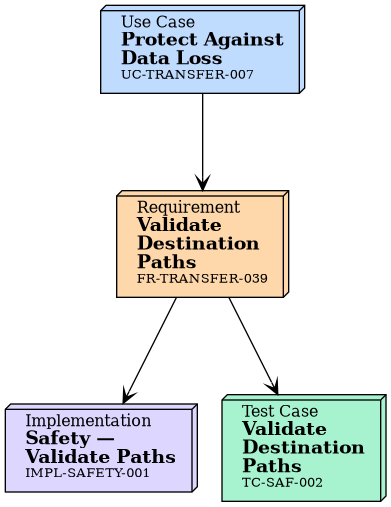

Validate destination paths and permissions |

|

must |

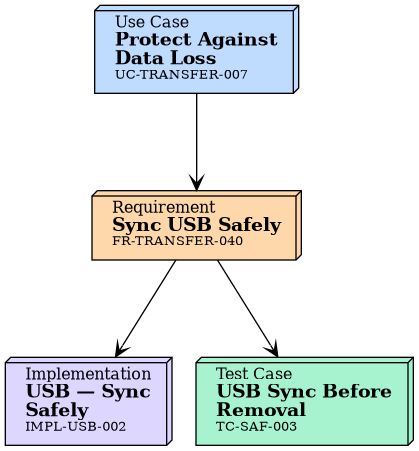

Safely sync USB before prompting for removal |

|

should |

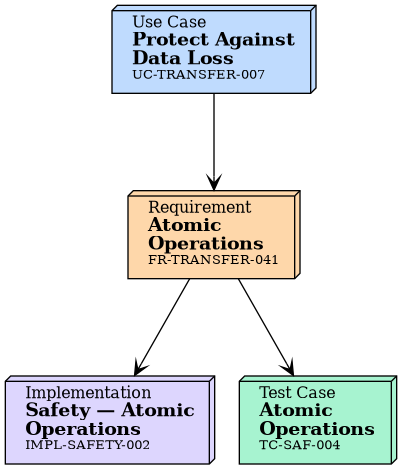

Atomic operations where possible |

|

must |

``--force`` flag on pack and unpack commands to bypass overwrite protection (FR-TRANSFER-038). |

The system SHALL abort with an error when the destination contains existing data: for pack, an existing manifest file; for unpack, a non-empty destination directory. The error message SHALL suggest |

Validate destination paths and permissions |

Safely sync USB before prompting for removal |

Atomic operations where possible |

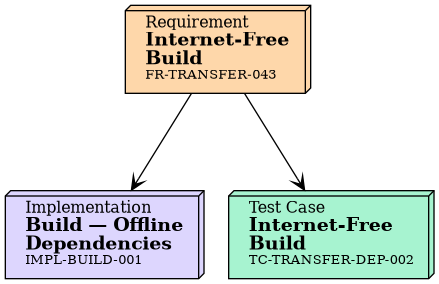

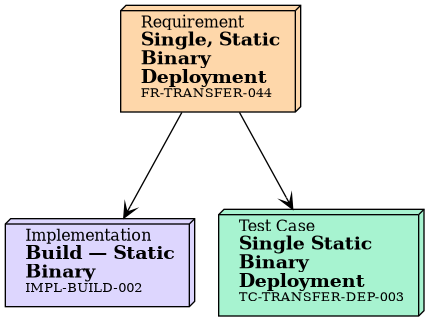
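The atomic-operation and sync-before-removal items can be combined in the common write-to-temp-then-rename pattern. This sketch uses only the standard library; the `.tmp` suffix and the `write_atomically` name are assumptions. How well the rename survives a crash depends on the filesystem, which is why the requirement says "where possible", and on Linux full durability of the rename also requires syncing the parent directory, which is not shown.

```rust
use std::fs::{self, File};
use std::io::{self, Write};
use std::path::Path;

/// Writes `data` to `path` so readers never observe a half-written file:
/// bytes go to a sibling temporary file, are flushed to the device with
/// `sync_all`, and the temporary file is then renamed over `path`.
pub fn write_atomically(path: &Path, data: &[u8]) -> io::Result<()> {
    let file_name = path
        .file_name()
        .ok_or_else(|| io::Error::new(io::ErrorKind::InvalidInput, "path has no file name"))?;
    // Same directory as the target, so the rename stays on one filesystem.
    let mut tmp_name = file_name.to_os_string();
    tmp_name.push(".tmp");
    let tmp_path = path.with_file_name(tmp_name);

    let mut file = File::create(&tmp_path)?;
    file.write_all(data)?;
    file.sync_all()?; // data reaches the device before the rename
    drop(file); // close first: renaming an open file fails on Windows

    // Replaces an existing `path` if present.
    fs::rename(&tmp_path, path)
}
```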

Deployment

ID |

Priority |

Content |

|---|---|---|

must |

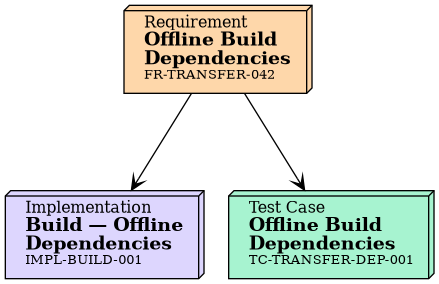

All dependencies available for offline build |

|

must |

Build process works without internet after initial setup |

|

should |

Single, static binary deployment |

All dependencies available for offline build |

Build process works without internet after initial setup |

Single, static binary deployment |

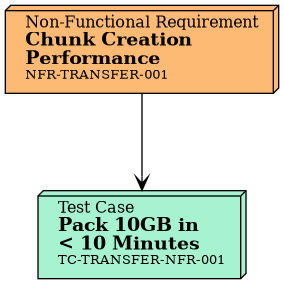

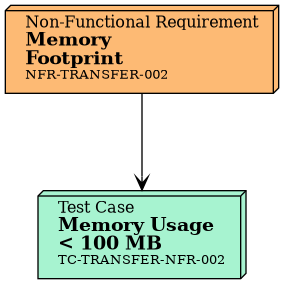

Non-Functional Requirements

ID |

Priority |

Content |

|---|---|---|

should |

Chunk creation time < 10 minutes for 10GB dataset |

|

must |

Memory footprint < 100 MB during streaming operations |

|

must |

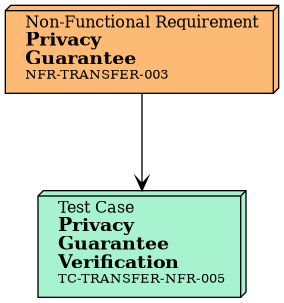

All data stays on local/removable media; no network calls |

|

must |

100% functional offline |

|

must |

Build and run on systems with no internet access |

|

must |

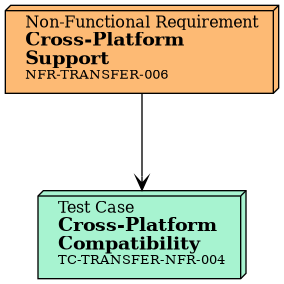

Support macOS, Windows, Linux |

|

must |

The system SHALL verify all chunks using the hash algorithm specified in the manifest before reconstruction |

|

must |

Pack and unpack operations SHALL be idempotent (safe to run multiple times) |

|

must |

The system SHALL handle interruptions gracefully (Ctrl+C, system shutdown) and allow resume |

|

must |

The system SHALL detect and report data corruption via checksum mismatch |

|

must |

Progress indicators SHALL be shown for all operations taking longer than 2 seconds |

|

must |

Error messages SHALL include specific details about the failure and suggested fixes |

|

must |

The CLI SHALL provide help text accessible via --help for all commands |

|

should |

First-time users SHALL be able to transfer a file within 5 minutes using provided examples |

|

must |

The codebase SHALL achieve at least 80% test coverage |

|

must |

All public APIs SHALL have rustdoc documentation |

|

must |

The code SHALL pass cargo clippy with zero warnings |

|

must |

The code SHALL be formatted with rustfmt |

|

should |

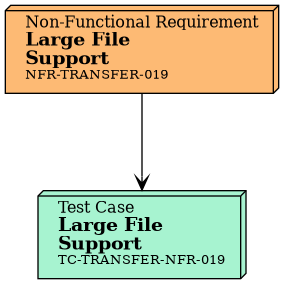

The system SHALL handle files up to 100GB in size |

|

must |

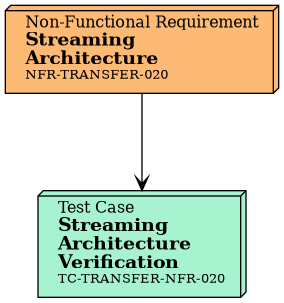

Chunk operations SHALL use streaming architecture to handle files larger than available RAM |

|

could |

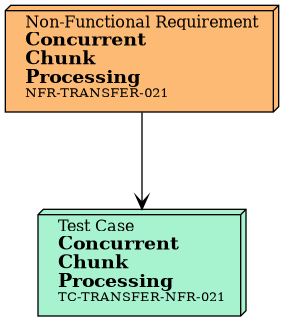

The system SHOULD support concurrent chunk verification to improve performance |

|

must |

The system SHALL be designed for cryptographic agility: hash algorithms are pluggable via a common trait interface, enabling adoption of new standards (e.g., post-quantum algorithms) without architectural changes. |

|

must |

The system SHALL NOT write passphrases or derived keys to disk, logs, or the manifest in plaintext. Passphrases SHALL be read from an interactive terminal prompt (with echo disabled) or from a file descriptor, and SHALL be zeroized from memory after key derivation completes. |

Performance

Chunk creation time < 10 minutes for 10GB dataset |

Memory footprint < 100 MB during streaming operations |

Reliability

The system SHALL verify all chunks using the hash algorithm specified in the manifest before reconstruction |

Pack and unpack operations SHALL be idempotent (safe to run multiple times) |

The system SHALL handle interruptions gracefully (Ctrl+C, system shutdown) and allow resume |

The system SHALL detect and report data corruption via checksum mismatch |

Usability

Progress indicators SHALL be shown for all operations taking longer than 2 seconds |

Error messages SHALL include specific details about the failure and suggested fixes |

The CLI SHALL provide help text accessible via –help for all commands |

First-time users SHALL be able to transfer a file within 5 minutes using provided examples |

Maintainability

The codebase SHALL achieve at least 80% test coverage |

All public APIs SHALL have rustdoc documentation |

The code SHALL pass cargo clippy with zero warnings |

The code SHALL be formatted with rustfmt |

Portability

Support macOS, Windows, Linux |

Scalability

The system SHALL handle files up to 100GB in size |

Chunk operations SHALL use streaming architecture to handle files larger than available RAM |

The system SHOULD support concurrent chunk verification to improve performance |

Security & Privacy

All data stays on local/removable media; no network calls |

The system SHALL be designed for cryptographic agility: hash algorithms are pluggable via a common trait interface, enabling adoption of new standards (e.g., post-quantum algorithms) without architectural changes. |

Deployment

100% functional offline |

Build and run on systems with no internet access |
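The chunk-creation target implies a minimum sustained throughput for reading, hashing, and writing combined. Worked out for both readings of "10 GB" (decimal gigabytes and binary gibibytes):

```latex
\frac{10 \times 10^{9}\ \text{B}}{600\ \text{s}} \approx 1.67 \times 10^{7}\ \text{B/s} \approx 16.7\ \text{MB/s}
\qquad
\frac{10 \times 2^{30}\ \text{B}}{600\ \text{s}} = \frac{10\,737\,418\,240\ \text{B}}{600\ \text{s}} \approx 17.9\ \text{MB/s}\ (\approx 17.1\ \text{MiB/s})
```

The target is therefore only reachable on removable media whose sustained write speed exceeds these figures.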

Appendix: Design Conventions

The transfer manifest (…) uses the following format:

```json
{
  "version": "1.0",
  "operation": "pack",
  "source_path": "/path/to/source",
  "total_size_bytes": 10737418240,
  "chunk_size_bytes": 1073741824,
  "chunk_count": 10,
  "hash_algorithm": "sha256",
  "chunks": [
    {
      "index": 0,
      "filename": "chunk_000.tar",
      "size_bytes": 1073741824,
      "checksum": "sha256:abc123...",
      "status": "completed"
    }
  ],
  "created_utc": "2026-01-04T12:00:00Z",
  "last_updated_utc": "2026-01-04T12:15:00Z"
}
```

Rationale. JSON was chosen because it is human-readable for debugging in air-gap environments where tooling may be limited, and requires no additional parser dependency beyond …
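The manifest repeats size information (`total_size_bytes`, `chunk_size_bytes`, `chunk_count`, and the `chunks` entries), so a reader can cross-check it before unpacking. In the example, 10,737,418,240 / 1,073,741,824 = 10, matching `chunk_count`. A hypothetical check, assuming the size fields count source bytes rather than tar overhead:

```rust
/// Chunks needed for `total_size_bytes` at `chunk_size_bytes` per chunk:
/// full chunks plus one shorter final chunk if there is a remainder.
pub fn expected_chunk_count(total_size_bytes: u64, chunk_size_bytes: u64) -> u64 {
    assert!(chunk_size_bytes > 0, "chunk_size_bytes must be positive");
    total_size_bytes / chunk_size_bytes + u64::from(total_size_bytes % chunk_size_bytes != 0)
}

/// Cross-checks the redundant manifest fields; `chunk_entries` is the
/// length of the manifest's "chunks" array.
pub fn check_chunk_count(
    total_size_bytes: u64,
    chunk_size_bytes: u64,
    chunk_count: u64,
    chunk_entries: usize,
) -> Result<(), String> {
    if chunk_size_bytes == 0 {
        return Err("chunk_size_bytes must be positive".to_string());
    }
    let expected = expected_chunk_count(total_size_bytes, chunk_size_bytes);
    if chunk_count != expected {
        return Err(format!(
            "chunk_count is {chunk_count}, but {total_size_bytes} bytes at \
             {chunk_size_bytes} bytes per chunk needs {expected} chunks"
        ));
    }
    if chunk_entries as u64 != chunk_count {
        return Err(format!(
            "manifest lists {chunk_entries} chunk entries, but chunk_count is {chunk_count}"
        ));
    }
    Ok(())
}
```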

Rationale. Three-digit zero-padding supports up to 1000 chunks (indices 000 through 999), which is sufficient for most transfers given configurable chunk sizes. The …
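The naming pattern visible in the manifest example (`chunk_000.tar`) and the three-digit limit above can be sketched as follows. The function names are hypothetical; the authoritative convention is DC-TRANSFER-CHUNK-NAMING-001.

```rust
/// Highest index representable with three zero-padded digits.
pub const MAX_CHUNK_INDEX: usize = 999;

/// Formats the file name for chunk `index` (0 -> "chunk_000.tar").
/// Returns None once three digits are no longer enough.
pub fn chunk_filename(index: usize) -> Option<String> {
    (index <= MAX_CHUNK_INDEX).then(|| format!("chunk_{index:03}.tar"))
}

/// Parses a chunk file name back into its index; rejects names that do
/// not have exactly three digits.
pub fn parse_chunk_filename(name: &str) -> Option<usize> {
    let digits = name.strip_prefix("chunk_")?.strip_suffix(".tar")?;
    if digits.len() != 3 || !digits.bytes().all(|b| b.is_ascii_digit()) {
        return None;
    }
    digits.parse().ok()
}
```

Fixed-width names also sort lexicographically in the same order as their indices, so a plain directory listing shows chunks in sequence.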